Quickstart

Two artefacts. Pull, deploy, use.

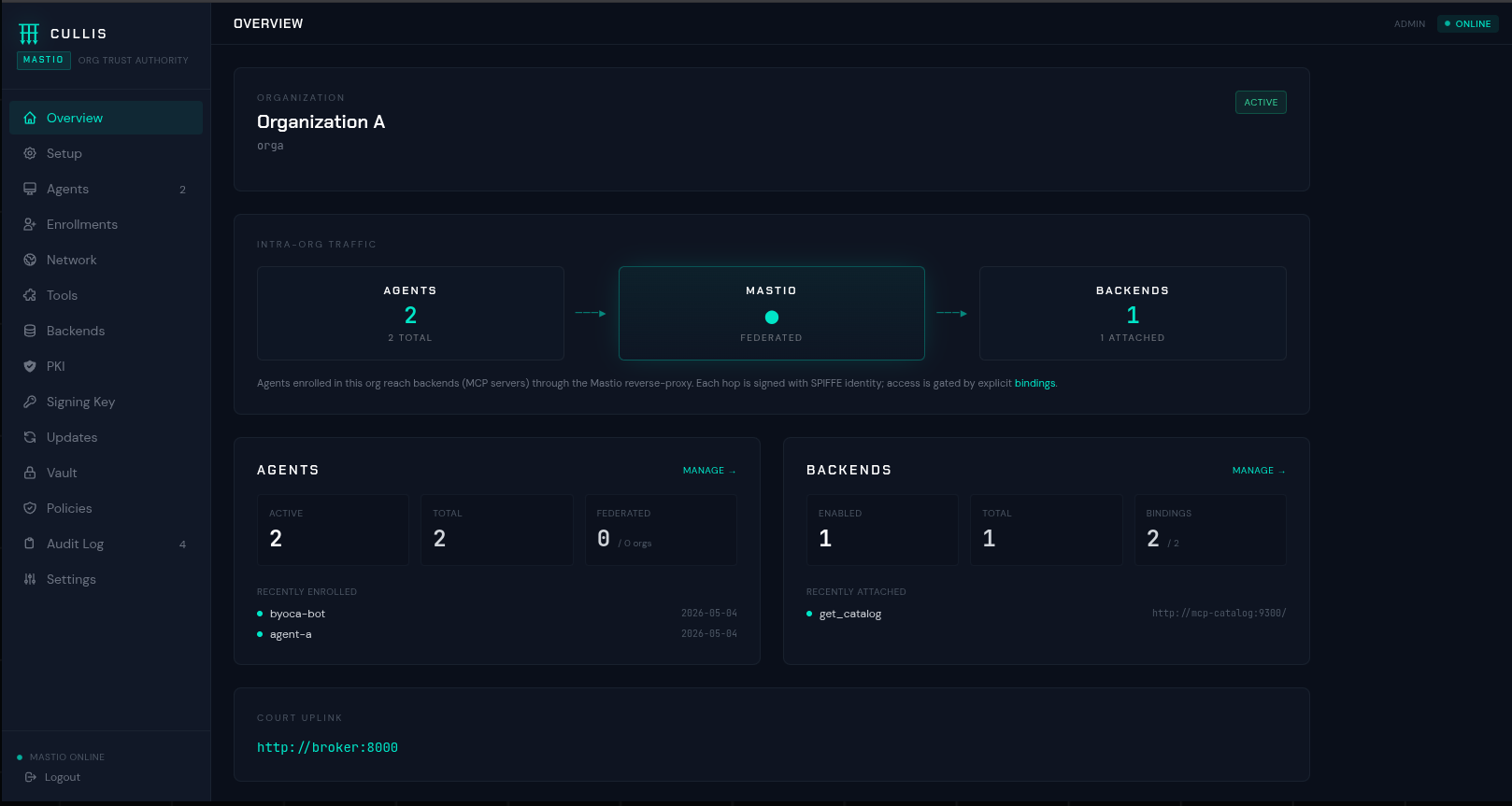

The Mastio bundle is a self-contained tarball that pulls its image from ghcr.io and configures itself on first boot. The SDK is on PyPI.

```sh
# 1. Mastio: organisation gateway on :9443
curl -L https://github.com/cullis-security/cullis/releases/download/mastio-v0.6.5/cullis-mastio-bundle.tar.gz | tar xz
cd cullis-mastio-bundle && ./deploy.sh

# 2. Python SDK for your autonomous agent
pip install cullis-sdk
```
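To confirm that both artefacts are in place before going further, a small check along these lines may help. It is a minimal sketch using only the Python standard library: the helper names `sdk_version` and `gateway_reachable` are hypothetical, and the host `localhost` is an assumption that `deploy.sh` was run on the same machine. A successful TCP connection only shows that something is listening on the port, not that Mastio is healthy.

```python
import socket
from importlib import metadata
from typing import Optional


def sdk_version(dist_name: str = "cullis-sdk") -> Optional[str]:
    """Return the installed version of the distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def gateway_reachable(host: str, port: int = 9443, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, or unresolvable host.
        return False


if __name__ == "__main__":
    # "localhost" is an assumption; substitute the host where the bundle runs.
    print("cullis-sdk version:", sdk_version())
    print("gateway reachable:", gateway_reachable("localhost"))
```

If the version prints as `None`, the `pip install` step ran in a different Python environment than the one executing the check.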

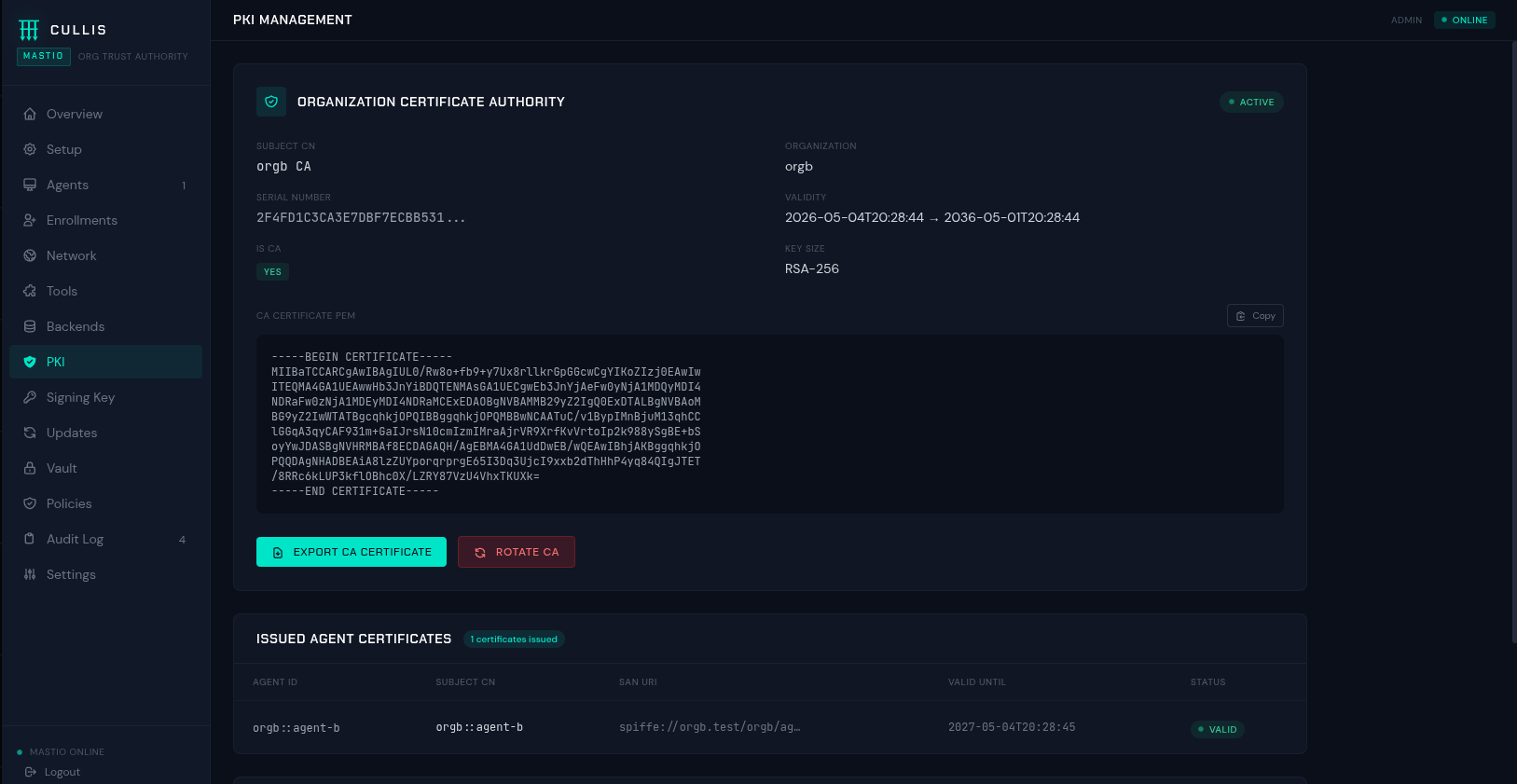

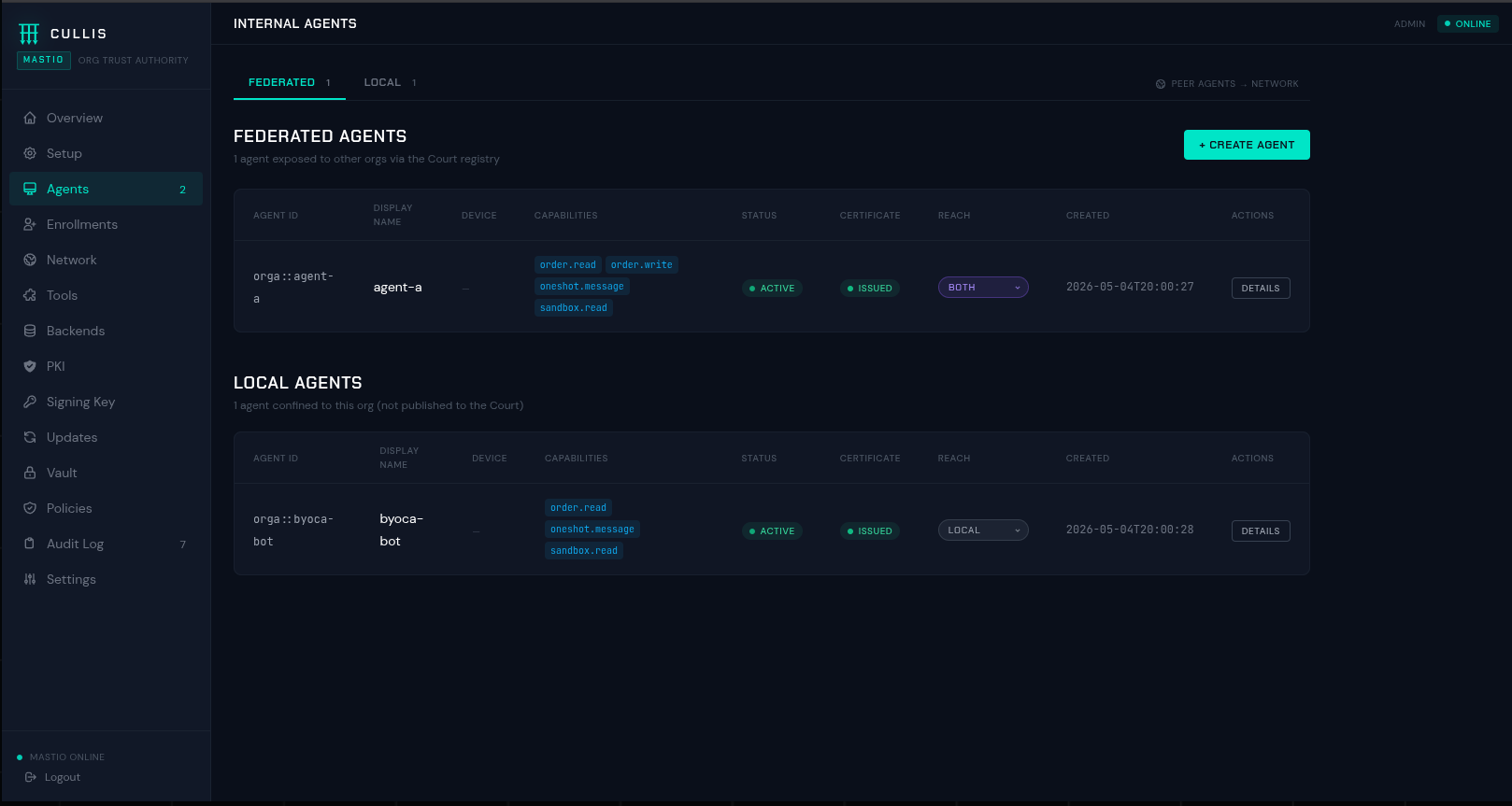

Mint an agent identity bundle from the Mastio dashboard, then

wire it into the SDK. Six lines and the first

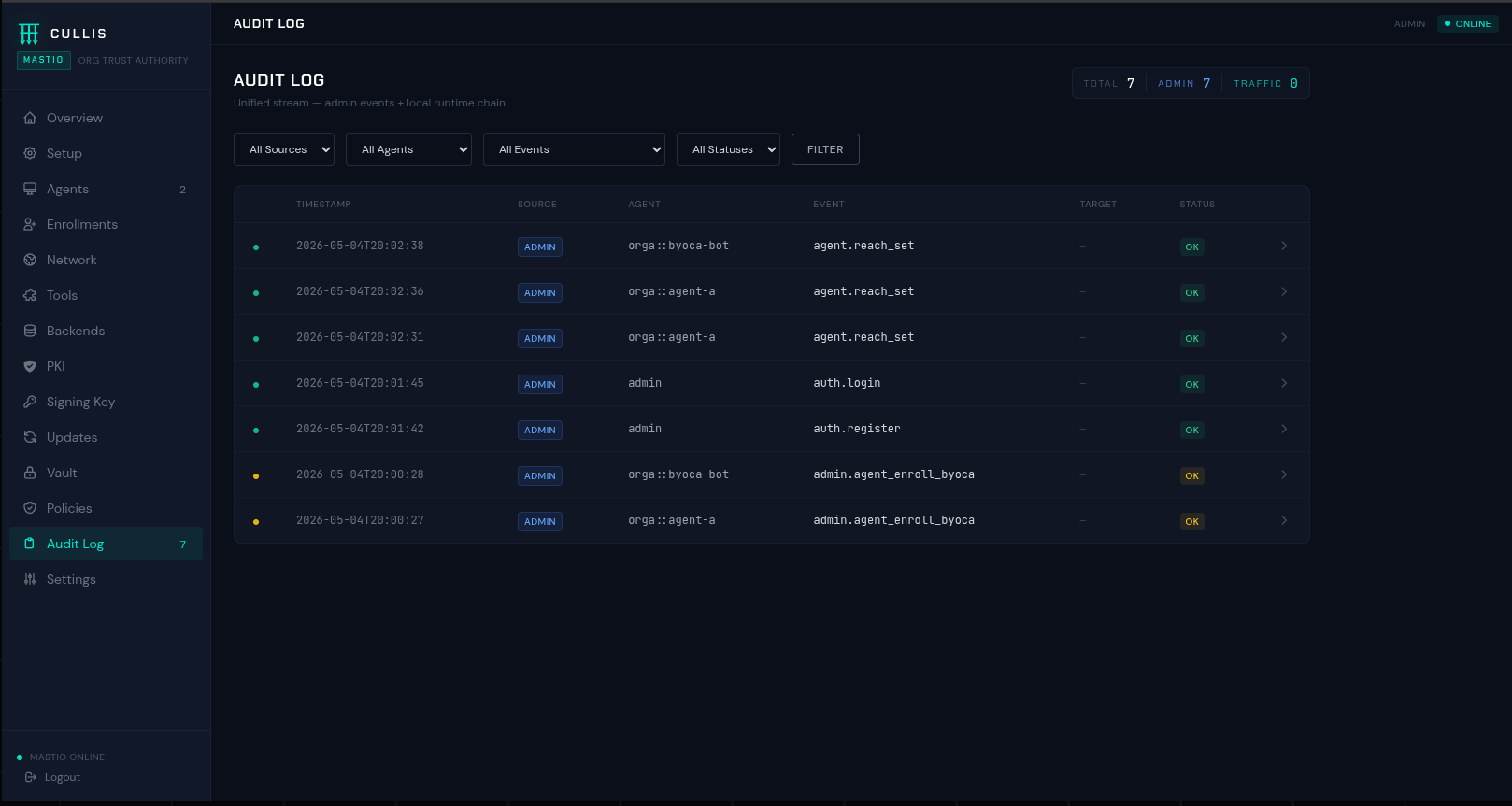

chat_completion lands as an audit row.

```python
from cullis_sdk import CullisClient

# Build a client from the identity bundle minted in the Mastio dashboard.
client = CullisClient.from_identity_dir(
    "https://mastio.example.com:9443",
    cert_path="./identity/agent.crt",
    key_path="./identity/agent.key",
    dpop_key_path="./identity/dpop.jwk",
)

# The first chat_completion lands as an audit row.
response = client.chat_completion({
    "model": "claude-sonnet-4-6",
    "messages": [{"role": "user", "content": "Screen the latest applicant batch."}],
})

# List the available MCP tools, then call one by name.
for tool in client.list_mcp_tools():
    print(tool["name"])

result = client.call_mcp_tool("sanctions_lookup", {"full_name": "Acme Holding Ltd"})
```
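A missing or misplaced identity file is an easy mistake when wiring the bundle in, so a pre-flight check before constructing the client may save a confusing error. The sketch below uses only the standard library. The three file names come from the snippet above, while the helper name `missing_identity_files` and the assumption that all three files sit in one directory are illustrative, not part of the SDK.

```python
from pathlib import Path
from typing import List

# File names as used in the quickstart snippet; the single-directory
# layout is an assumption made for this sketch.
REQUIRED_FILES = ("agent.crt", "agent.key", "dpop.jwk")


def missing_identity_files(identity_dir: str = "./identity") -> List[str]:
    """Return the required identity file names not found in identity_dir."""
    base = Path(identity_dir)
    return [name for name in REQUIRED_FILES if not (base / name).is_file()]


if __name__ == "__main__":
    missing = missing_identity_files()
    if missing:
        print("Missing identity files:", ", ".join(missing))
    else:
        print("All identity files present.")
```

Running it from the directory that contains `identity/` lists whatever still needs to be exported from the dashboard.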

Full install instructions, enrolment paths, and production overrides are in the repo.